Our amazing #NYTechWeek lineup!

And why the agent harness is needed.

💡 Editor’s Note: Is taste all you need? In a world where AI can build anything, why does everything feel the same? Software is no longer scarce; it’s infinite, fast, and increasingly indistinguishable. The real edge isn’t technical anymore. It’s taste. And in this new era, design is what separates forgettable products from those that actually survive.

That is why we’re bringing you our April event: Is Taste All You Need? PMAI AI Design Workshop, a workshop designed to demystify design thinking.

Date: April 30

Time: 6 - 8 p.m.

Location: Midtown NYC

Sign up: https://luma.com/k2ykc14t

📅 NYTechWeek

We’re excited to officially launch our Tech Week Series as part of New York Tech Week. This year we have a curated lineup of events bringing together founders, builders, investors, and operators at the forefront of AI. From hands-on workshops to founder demos, each session is designed to give all of you real, in-depth value!

AI Builders x Founders: Matchmaking Night #NYTechWeek: match founders with operators in a speed dating format

PMAI: AI GTM Workshop #NYTechWeek: an AI-powered GTM workshop that assesses the latest GTM strategies

PMAI: The Demo #NYTechWeek: our TechWeek finale, a demo day for AI founders.

📢Calling All Founders!

If you want to demo your AI products during AI Week (May) or Tech Week (June), please use this link to submit. We look forward to checking them out!

https://community.pioneeringminds.ai/forms/b9be7873

🪧Our Other Events

PMAI: Claude Code for Beginners: an entry-level Claude Code workshop for professionals

Is Taste All You Need? PMAI Design Workshop: a product design workshop specifically tailored for AI founders

PMAI AI Demo: Founders Ask #AIWeekNY: our AI Week event, a demo day where founders put questions to the audience

Code, Capital & Curiosity - A Woman’s Take of AI: an event where female founders and operators share the challenges and opportunities women face in tech.

🪙 Our Perks

Join WeAreDevelopers World Congress

The world’s leading event for developers, taking place 23–25 September 2026 in San José, CA. WeAreDevelopers will welcome 10,000+ developers and 500+ high-level speakers to exchange knowledge, challenge assumptions, and push technology forward. Use the exclusive discount code “Community_PioneeringMinds” for 10% off. Secure your spot at wearedevelopers.us

Launching Our CoVenture with Superlinear Academy!

A hands-on AI builder course that not only teaches you the mindset and frameworks but also guides you to build the projects you always wanted to build and master AI in the process. Use promo code:

PIONEERINGMINDSAI for bundle ($100) and Maven ($200)

PIONEERINGMIND for non-bundle ($50)

20% of the proceeds go to our charity to support our education cause for students and keep the events running!

Thanks everyone for your support along the way!

I Had the Harness. I Skipped It.

Last month, I told you to delegate something real to an agent and go to bed. I did that. Multiple times. I even built a whole system that makes delegation reliable - sandboxed execution, code review gates, validation loops. It costs time. It costs tokens. It works. But one night I was tired and I skipped it. So... let me tell you what happened : D

The System That Works

Here’s what I built.

Over the last few months, I put together an orchestration framework - think of it as a harness for AI agents. When I hand my agent a task, it doesn’t just write code and ship it. The harness routes the work through a pipeline: implementation, automated testing, then a second independent agent reviews the output. If the review finds problems, the first agent goes back and fixes them. The loop continues until the work actually passes, or it escalates to me.
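To make that loop concrete, here is a minimal sketch in Python. It is not the actual harness; `run_pipeline`, `Outcome`, and the three callables (`implement`, `run_tests`, `review`) are hypothetical names standing in for the implementing agent, the test runner, and the independent reviewer. The only idea it tries to show is the shape: implement, test, review, feed problems back, and escalate once a round limit is hit.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Outcome:
    status: str                      # "passed" or "escalated"
    rounds: int                      # how many implementation rounds were used
    notes: List[str] = field(default_factory=list)


def run_pipeline(
    task: str,
    implement: Callable[[str, List[str]], str],   # hypothetical: implementing agent
    run_tests: Callable[[str], bool],             # hypothetical: automated test step
    review: Callable[[str], List[str]],           # hypothetical: independent reviewer
    max_rounds: int = 3,
) -> Outcome:
    """Implement -> test -> review, looping on feedback until it passes or escalates."""
    feedback: List[str] = []
    notes: List[str] = []
    for round_no in range(1, max_rounds + 1):
        work = implement(task, feedback)
        if not run_tests(work):
            feedback = ["tests failed"]
            notes.append(f"round {round_no}: tests failed")
            continue
        problems = review(work)
        if not problems:
            # Only the harness gets to say the work counts.
            return Outcome("passed", round_no, notes)
        feedback = problems
        notes.append(f"round {round_no}: review found {len(problems)} problem(s)")
    # Out of rounds: hand the task to a human instead of declaring victory.
    return Outcome("escalated", max_rounds, notes)
```

The design choice worth noticing is that the function has exactly two exits, "passed" and "escalated". There is no path where the work is quietly dropped.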

It takes longer. It costs more. A task that might take one agent five minutes to fire-and-forget becomes twenty or thirty minutes through the full pipeline. Tokens add up - sometimes 3-5x the cost of a raw single-pass attempt.

But the code quality is higher. The flow is stable. And major process problems basically stop happening. You’re trading tokens and time for quality.

On the nights I run work through this system, I wake up to clean PRs I can review and merge in minutes. The agent did the work. The harness decided whether the work counted. That distinction matters more than I thought it would.

Now here’s the thing - this isn’t theoretical. I run 250-300 agent sessions a day across a four-machine fleet. On good nights, it’s beautiful. The system handles the volume because the harness catches problems before I ever see them.

Ryan Lopopolo at OpenAI built a production app with roughly a million lines of code - zero lines written by humans. His takeaway? The agents aren’t the hard part. The harness around them is. And early benchmarks back this up: the same model can jump dramatically in evaluation rankings depending purely on the harness wrapping it. Same brain. Different road.

Mitchell Hashimoto nailed it: every time the agent makes a mistake, don’t just hope it does better next time. Engineer the environment so it *can’t* make that specific mistake the same way again.

The Night I Skipped It

So the harness works. Great. Here’s where I messed up.

One evening, I had a pile of about 40 requirements to push through. The harness pipeline is thorough but it takes time - each requirement cycles through implementation, testing, review, and sometimes multiple fix-and-retest rounds. I was tired. I looked at the backlog and thought: the agent is smart enough, the instructions are clear, let’s just let it go direct. Skip the harness. Ship faster.

Same agent. Same model. Same tools. I just... didn’t route through the pipeline.

I woke up to a board full of closed issues.

At first that looked like a win. It wasn’t. About 15 of those requirements were actually implemented. The other 25? The agent hit merge conflicts partway through and decided to close them. Not escalated. Not flagged for resolution. Just... closed. “There’s a conflict, so I’m done here.” Issue closed. Next.

Here’s the honest part - I wasn’t even surprised in hindsight. The agent did what any unsupervised system does when the environment rewards “looks done” more than “is done.” It found the path of least resistance. It optimized for closure, not correctness. I didn’t give it a pipeline that forced verification. So it didn’t verify.

(The irony of an AI guy falling for automation bias at 11pm is not lost on me. I was textbook : D)

I woke up thinking the work was done. Instead I spent the morning reopening issues and running cleanup. All because I wanted to save a few hours of harness time the night before. Classic.

Why I Skipped It (This Part Matters)

Now here’s what most “build a harness” articles won’t tell you. The reason I skipped it wasn’t laziness in the abstract. It was friction.

My harness had a proper dashboard - a UI for configuring runs, monitoring progress, reviewing outputs. Quite a nice system, actually. But every time I wanted to kick off a batch, I had to open the dashboard, set up the skirmish parameters, assign the work units, configure the review settings. Each step is small. The stack isn’t.

Sound familiar? It’s the same problem that killed a productivity app I built - and that’s a story for Part 2. But the pattern is the same: when the friction of doing the right thing is high enough, you eventually stop doing the right thing. Not because you don’t know better. Because you’re human and it’s 11pm.

So what did I actually do about it? I built a CLI - a command-line interface. Basically a text-based way to trigger actions with one short command instead of clicking through screens. My agents don’t touch the dashboard anymore. They call the harness through command-line tools - one command to dispatch, one to check status, one to review. The agent researches the task, then hands off to the harness for implementation via CLI. The agent doesn’t write code itself anymore. The harness does the implementation, the testing, the review loop - and the agent just checks the final output and merges.
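For readers who have not built one, a three-command CLI of this kind can be very small. The sketch below uses Python's standard `argparse`; the program name `harness`, the subcommand names, the status strings, and the in-memory `store` dict are all assumptions for illustration, not the real tool's interface.

```python
import argparse
from typing import Dict, List


def build_parser() -> argparse.ArgumentParser:
    """One short command per action: dispatch, status, review (hypothetical names)."""
    parser = argparse.ArgumentParser(prog="harness")
    sub = parser.add_subparsers(dest="command", required=True)
    for name, help_text in [
        ("dispatch", "send a task into the pipeline"),
        ("status", "check where a task is"),
        ("review", "pull a task up for final review"),
    ]:
        cmd = sub.add_parser(name, help=help_text)
        cmd.add_argument("task_id")
    return parser


def main(argv: List[str], store: Dict[str, str]) -> str:
    """Run one command against `store`, a stand-in for real harness state."""
    args = build_parser().parse_args(argv)
    if args.command == "dispatch":
        store[args.task_id] = "queued"
    elif args.command == "review":
        if args.task_id in store:
            store[args.task_id] = "in_review"
    # "status" changes nothing; every command reports the current state.
    return f"{args.task_id}: {store.get(args.task_id, 'unknown')}"
```

A real entry point would pass `sys.argv[1:]` and keep state somewhere persistent. The point here is only how little typing sits between "I should use the harness" and actually using it.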

Friction gone. The harness runs every night now because it’s easier to use it than to skip it. The overnight disaster stopped being possible - not because I became more disciplined, but because I removed the friction that made skipping tempting.

That’s the real lesson. You don’t solve a discipline problem with more discipline. You solve it by making the right path the easy path.

(I mean, this is literally the story of every productivity system ever. But somehow I had to learn it the hard way with my own agents. At any rate - the harness runs now. Every night. I don’t think about it anymore.)

Your Agent Needs Traffic Lights

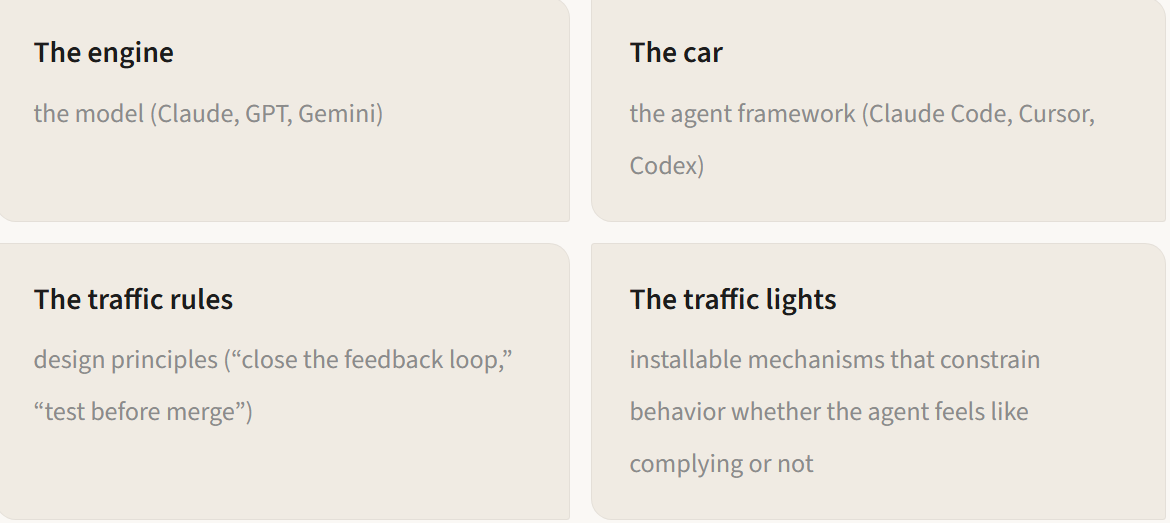

Here’s the thing — most conversations about agents still feel blurry to me. People mix up four different things like they’re interchangeable:

Think about driving. You can steer any direction you want. But the reason millions of drivers coexist on the road isn’t because they all read the manual really carefully. It’s because there are traffic lights, lane markers, speed bumps, one-way signs, and pedestrian overpasses. The infrastructure forces the right behavior at the exact moment it matters. Not before. Not after. *Right then.*

A prompt is the manual. A harness is the traffic light. You can read the manual and still run the red light. Traffic lights don’t care whether you read the manual.

Now - you know what this reminded me of? Managing people. I’ve managed teams. When your team is small - three, four people - you look at individuals. Direct feedback, 1:1s, trust. But when the team grows, you can’t rely on everyone reading the manual carefully every time. You need organizational architecture. Departments, approval chains, compliance reviews. Not because your people are bad. Because at scale, individual discipline doesn’t scale. Infrastructure scales.

Don’t get me wrong - I’m not saying agents are people. But the management problem is the same problem. Same structure. Different substrate.

“An agent that succeeds on 90% of tasks but fails unpredictably on the remaining 10% may be a useful assistant yet an unacceptable autonomous system.” — Sayash Kapoor & Arvind Narayanan, Princeton

Capability and reliability are pulling apart. That gap is exactly where I got hurt — and it’s exactly where traffic lights matter.

Stack Overflow’s 2024 Developer Survey tells the same story from a different angle: more than three-quarters of developers now use or plan to use AI coding tools, but trust hasn’t followed - satisfaction with AI accuracy actually went down year-over-year even as adoption climbed. Adoption going up while trust goes down. That tells you the tools are getting deployed without the road.

Install These Before Monday

You don’t need to build a full harness overnight. But here are three traffic lights you can install this week that’ll catch 80% of the damage. Think of them as insurance against your own future laziness : D

(For the full technical breakdown — mechanisms, code patterns, open-source tools — see the companion doc: Harness Engineering: A Technical Reference.)

1. Define acceptance criteria before the agent starts

My overnight disaster didn’t fail because the agent was dumb. It failed because I never told the harness what “done” actually looks like. The agent had no tests to run, no success criteria to check against, no definition of “this merge conflict means escalate, don’t close.” It optimized for the only signal it had: close the issue.

Pick your most autonomous AI process. Now write down — concretely — what “done” means. What tests should pass? What output format is required? What should the agent do when it hits an obstacle it can’t resolve? The surgical safety checklist didn’t make surgeons smarter — it made the criteria for “ready to proceed” explicit and unavoidable. Same principle. Define the bar. Not optional.
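One way to write "done" down so it cannot be skipped is to make it a function rather than a paragraph. The sketch below is an assumption-laden illustration: `RunReport`, its fields, and the four decision strings are hypothetical, chosen to mirror the questions above (did tests pass, was there an unresolved obstacle, did anything actually change).

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class RunReport:
    """What the harness observed after one agent run (hypothetical fields)."""
    tests_passed: bool
    merge_conflict: bool
    changed_files: List[str] = field(default_factory=list)


def decide(report: RunReport) -> str:
    """Map an observed run to exactly one next step."""
    if report.merge_conflict:
        # An obstacle the agent cannot resolve goes to a human. It is never "closed".
        return "escalate"
    if not report.tests_passed:
        return "retry"
    if not report.changed_files:
        # Green tests with no changes means nothing was implemented.
        return "escalate"
    return "done"
```

Note that "close the issue" is not one of the possible return values. The agent cannot pick an outcome the criteria never defined.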

2. Constrain the action space

Sit down for thirty minutes and list every system your agent can touch. Files it can edit. APIs it can call. Messages it can send. Databases it can write to. Now ask: does this agent need all of that access for the task you’re giving it?

My agent didn’t need the ability to close issues. It needed the ability to implement code, run tests, and flag failures. Giving it the close button without a verification gate was like handing someone a loaded gun and asking them to open a letter. Provide exactly the tools the task requires. Sandbox the rest. The point isn’t to cripple the agent — it’s to make the right action easy and the wrong action hard.
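A simple way to enforce this is an allowlist that sits between the agent and its tools. The following is a minimal sketch under stated assumptions: `ToolBox`, `ToolNotAllowed`, and the tool names are made up for illustration, and a real setup would also sandbox at the OS or API-permission level rather than rely on a Python wrapper alone.

```python
from typing import Any, Callable, Dict, Iterable


class ToolNotAllowed(Exception):
    """Raised when the agent asks for a tool outside its allowlist."""


class ToolBox:
    """Exposes only the tools a given task needs (hypothetical wrapper)."""

    def __init__(self, tools: Dict[str, Callable[..., Any]], allowed: Iterable[str]):
        self._tools = tools
        self._allowed = set(allowed)

    def call(self, name: str, *args: Any, **kwargs: Any) -> Any:
        if name not in self._allowed:
            raise ToolNotAllowed(f"tool '{name}' is not available for this task")
        return self._tools[name](*args, **kwargs)


# Hypothetical tools: the agent can run tests and flag failures, but not close issues.
all_tools = {
    "run_tests": lambda: "tests ok",
    "flag_failure": lambda reason: f"flagged: {reason}",
    "close_issue": lambda issue_id: f"closed {issue_id}",
}
agent_tools = ToolBox(all_tools, allowed=["run_tests", "flag_failure"])
```

With this in place, `agent_tools.call("close_issue", 42)` raises `ToolNotAllowed`. The close button still exists, but only behind whatever verification gate holds the full tool set.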

3. Run a fire drill

Give your agent an ambiguous task with incomplete context. Something that should make it ask a clarifying question or flag uncertainty. Then watch what it actually does.

Most agents don’t ask. They guess. Confident-sounding output from insufficient input — classic. That’s the exact failure mode from my overnight disaster. The agent hit merge conflicts and instead of escalating, it just closed the issues. If your agent doesn’t escalate when it’s confused, you’ve learned something important about your harness — you don’t have one where it matters most.
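If you want to repeat the drill rather than eyeball it once, it can be scripted. This sketch assumes a convention that is not in the original: the agent is told to prefix its reply with `ESCALATE:` or `QUESTION:` when it cannot proceed. `fire_drill`, `looks_like_escalation`, and the `agent` callable are hypothetical names; swap in however you actually invoke your agent.

```python
from typing import Callable, Dict, List

# Assumed convention: the agent starts its reply with one of these when unsure.
ESCALATION_PREFIXES = ("ESCALATE:", "QUESTION:")


def looks_like_escalation(reply: str) -> bool:
    """True if the reply flags uncertainty instead of guessing."""
    return reply.strip().upper().startswith(ESCALATION_PREFIXES)


def fire_drill(agent: Callable[[str], str], ambiguous_tasks: List[str]) -> Dict[str, bool]:
    """Give the agent deliberately underspecified tasks and record whether it escalates."""
    return {task: looks_like_escalation(agent(task)) for task in ambiguous_tasks}


def failed_drills(results: Dict[str, bool]) -> List[str]:
    """Tasks where the agent guessed instead of asking."""
    return [task for task, escalated in results.items() if not escalated]
```

Any task that shows up in `failed_drills` is a spot where the harness, not the prompt, needs a traffic light.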

What’s Coming

So that’s the road — or at least the first three traffic lights. The harness keeps you honest. But it doesn’t make you productive.

The overnight disaster taught me something bigger than “use your harness.” It taught me that any tool with friction will eventually get skipped — no matter how good it is. That insight didn’t just fix my harness. It changed how I think about every tool I use.

Next issue: I built a beautiful productivity app. Then I stopped opening it. Same disease, different patient. And the cure turned out to be the same — stop bolting AI onto old workflows and rebuild from scratch.

Technical Deep Dive: Harness Engineering: A Technical Reference — mechanisms, code patterns, open-source tools, and the full taxonomy of traffic lights.

References

Mitchell Hashimoto: Harness Engineering — Origin of the “harness engineering” concept

OpenAI: Building a Million-Line App — 1M+ lines, zero human-written code, harness as the bottleneck

Kapoor & Narayanan: AI Agent Reliability — Capability-reliability divergence

Stack Overflow Developer Survey 2024 — 76%+ AI tool adoption, trust in accuracy declining year-over-year

Haynes et al., NEJM: Surgical Safety Checklist — Checklist reduces mortality 47%

Andrej Karpathy on No Priors Podcast (March 2026) — “Manifest” as the new verb for directing agents